Feed Historical Manuscripts to the AI Bot? Think Again.

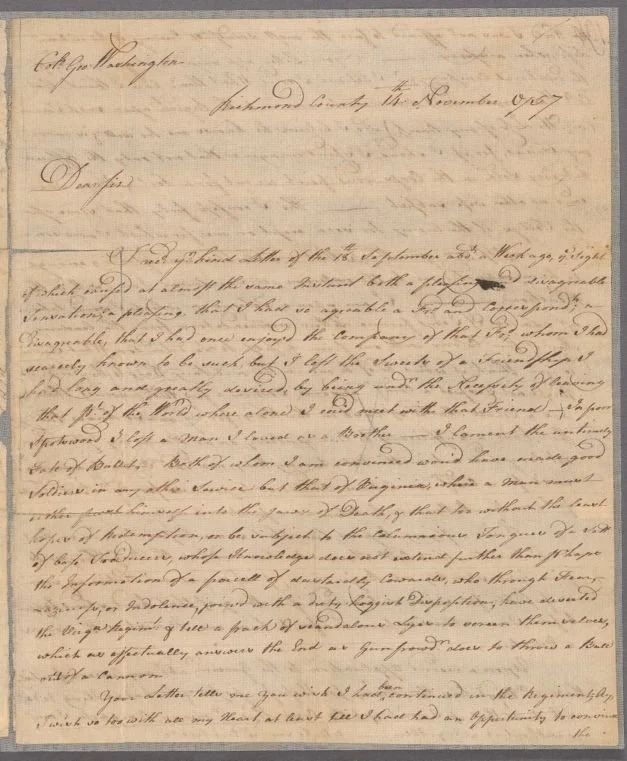

A Letter from William Peache to George Washington, 1757 (New York Public Library)

Documentary Editing’s Travails, Accomplishments—and Failures

I spent twenty years of my professional career in the documentary editing field at the Papers of George Washington at the University of Virginia. For the final six years of that period, 2010-2016, I was the project’s Editor-in-Chief (I preferred to say Director).

Throughout that time, I came to understand the hard and meticulous work necessary to transcribe, edit, annotate, and publish the vast (approximately 130,000 piece) collection of letters to and from George Washington, and also recognized the public’s growing impatience with the lengthy processes involved in preparing these documents, and making them widely available outside a paywall.

Let’s address one criticism at the start: the process was long—too long. The Washington Papers were established in 1968; other major Founding Father and related projects had been around since the 1950s, and even today many of them remain unfinished despite hundreds of millions of dollars of government and private funding (still, a pittance compared to AI investment these days). Most if not all of these projects broke repeated promises on their completion dates.

Next, let’s confess a few other unpalatable truths. First, the universities at which these projects were usually based often milked them for all they were worth—for example, skimming off huge chunks of “indirect costs” from federal grants and diverting them to purposes barely if at all related to the projects’ upkeep. Better cafeteria french fries, anyone?

Second, I personally overheard several documentary editors express their determination to drag their feet on editing and publication. They did so in order to extend their job security, even if it meant breaking deadline commitments to public and private funders. There’s nothing scandalous about this—it’s human. When a project ends, employees (largely untenured research faculty, or staff) lose their jobs. I heard editors express hopes of extending their projects until retirement age, and who can blame them?

Third, the ‘Bartleby the Scrivener’ mentality was pervasive in the field of documentary editing, and I’ve got no reason to believe that isn’t still the case. By this I mean the reluctance or outright refusal of many editors to engage with the public. Personally, I always felt that receipt of public funding behooves editors to share their knowledge with teachers, students, and others. I often felt like a voice in the wilderness in this regard—many editors told me this wasn’t their job, and they didn’t have the time or (to be honest) the inclination for it. They wanted to edit and be left alone.

The “big documentary editing project” system, then, was/is deeply flawed and increasingly looks obsolete. But what were/are the alternatives?

From my years as a young documentary editor (personal photo)

Crowdsourcing and Other Shortcuts Ending in Swamps

For many years in the early 2000s, there was talk of Congress deluging the big projects with money, on the understanding that the projects would finish their work rapidly and make their content freely available online.

This was always going to be a non-starter, given the lack of space, trained labor, simple economies of scale, and the disinclination of editors to work themselves out of jobs—I won’t get into detail. One positive result, however, was the creation of Founders Online, making the Founder projects freely available online (with preliminary edits of some documents), thanks especially to NARA’s National Historical Publications and Records Division.

Still, this wasn’t enough for many.

So—let’s find a short cut! One pervasive idea—now resurrected through AI—was to use O[ptical] C[haracter] R[ecognition], or OCR, to read and transcribe documents electronically via designated software. Problem was, despite promises by the tech hucksters of the day, none of these programs ever came close to working with anything approaching accuracy.

Another idea, madly popular for a long time (and still so, in some places) was crowdsourcing. The idea hinged on the assumption that thousands of people out there would love to read and transcribe documents, for fun, on a volunteer basis—no pay, in other words. I always thought the concept sheer idiocy, for two reasons: one—exposed to the long-term tedium of editing thousands of documents, these people would soon quit. Second, as untrained amateurs, they would produce poor-quality work. As any manager who has had to deal with untrained or inept subordinates (as I definitely have) could tell you, it’s more efficient to go it alone than to correct and oversee their work.

Do I feel vindicated at crowdsourcing’s inevitable demise? Sort of. But I’m not happy, for a worse idea is now taking hold: the wholesale replacement of documentary editing with AI.

A Bot (Library of Congress)

AI: Making Challenges Insurmountable

AI is, fundamentally, a shortcut intended to circumvent learning and other mental exertion, and to generate astronomical profits for tech giants that have invested mind-boggling amounts of money in its success. And it’s incredibly seductive. Big Tech is deliberately working to insinuate AI into every corner of public life—very much including schools—and to foster public dependence upon it in every sphere—again, not because it necessarily works well, but in order to generate return on investment. They cannot afford to fail.

I won’t name names here, but I’ve been appalled recently to see museums and manuscript repositories crowing triumphantly (naively?) about how they will use AI—in partnership with well-paid, Mephistophelean, private contractors—to make millions of untranscribed manuscripts available to the public. Geez, that sounds awesome!

Too bad it’s a con.

Surely most, maybe even all, of these repository overseers are well-meaning—they want to make accessible documents that would otherwise remain unavailable (or indecipherable by generations unaccustomed to cursive writing). But that doesn’t change the fact that the AI hucksters are salespeople selling a product—not really different in essence from those late-night TV ads promising to make a housemaker’s life easy-peasy. Smart people shouldn’t fall for it, but they always do.

This is just a blog post, not an academic article. So instead of belaboring readers with scholarly parsing and nitpicking, I’ll just summarize a few things:

Let’s start with one premise that I will be so bold as to state as a fact: text—a letter, an article, a book, even an email—isn’t just a collection of data. It’s an inherently creative, individual, and even unique production that can only fully be understood in form, framework, and cultural and historical context. AI, by contrast and in its very nature, can only conceive of text as data—a collection of letters, words and sentences that convey a data-based meaning.

Specifically, handwritten, pre-twentieth century manuscripts were meant to be conveyed on paper, and both composed and consumed subjectively, not as datasets. I have never seen a machine that can transcribe a manuscript with anything close to the accuracy that an intelligent or trained human can. Why? Because a human can instinctively interpret what was intended to be a communication to another human. So even if we concede (which I don’t) the AI hucksters’ insistence that their machines can transcribe with close to total accuracy, they cannot even begin to approach understanding.

There are so many factors to consider here, and I can only briefly touch on them. Can a machine, or language model like AI, interpret the subtleties of handwriting, its artistry or how it changed over time; what these changes suggest about intellectual growth or things like haste, distraction, stress, sickness and fatigue? No, not even close. Can AI detect and comprehend emphasis in writing tones, placement, punctuation, letter structure, doodles, emendations and edits, context, and so on? These, among many others, are the things trained editors provide to promote not just recognition (which AI can do, to a degree) but comprehension and understanding. They do this through the priceless annotation appended to these documents in published editions—none of which an AI could even come close to mimicking.

Finally, knowing the vast complexities of 18th century handwriting, spelling, and orthography as I do, I insist that for machines, manuscripts will always be incomprehensible. Machines and language engines can never fully, even remotely comprehend human communication with other humans. Think back to your last chat with a loved one or friend. Did you exchange data? Or was it something more?

Full Stop. (And ditch your chatbot).

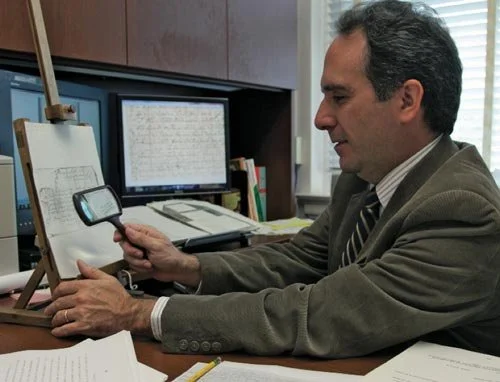

Me as Director of the Washington Papers (American Heritage, “An Ignoble Profession,” 2011).

Conclusion: So What?

Still, who cares? Fewer and fewer people read cursive every year. Isn’t using AI to translate these documents for public consumption, even imperfectly, better than doing nothing at all?

Well, no. Just, NO.

First, the money that you (library, museum, archive, foundation) filter off to techbros who know nothing of history, documentary editing, contemporary context, etc., could also be spent on hiring, training, and employing humans who might be slower, but can do the job more accurately, and with human understanding that can be conveyed in annotation and other means. In other words, you can hire instead of fire. (Don’t worry about firing the machine. It doesn’t have feelings to be hurt, or children to feed). Just: tell your human employee not solely to edit, but engage (something AI also can’t do).

Second, AI-produced content will always be not just imperfect, but misleading; and misleading information, repeated and eventually accepted as truth, will always poison the well of human knowledge. As my late lamented mentor at the Washington Papers, Dr. Bill Abbot, told me, no information is always better than bad information. People naturally will avail themselves of the information that’s easiest to get, not taking the time to see it’s unreliable. They will, in other words, come to take AI-generated errors and lies as truth.

So let’s imagine a world in which archivists, librarians, and humanities scholars come to recognize AI for the scam it is. Then what? Personally, I’d love to see a reinvention of documentary editing. Using private, not government funding, hire human editors to do the job right, efficiently, holding them to deadlines on penalty of layoff, outside academic systems that siphon funds and incentivize sloth, and insist on public representation and engagement to enrich non-academics.

Don’t tell me it’s too expensive. Tech has invested trillions of $ per year in AI. Pay a human to do work you can trust, instead of enriching a bot’s handlers to produce rot. And since AI junk amounts to less than zero, even nothing at all will always be its master.